About the Project

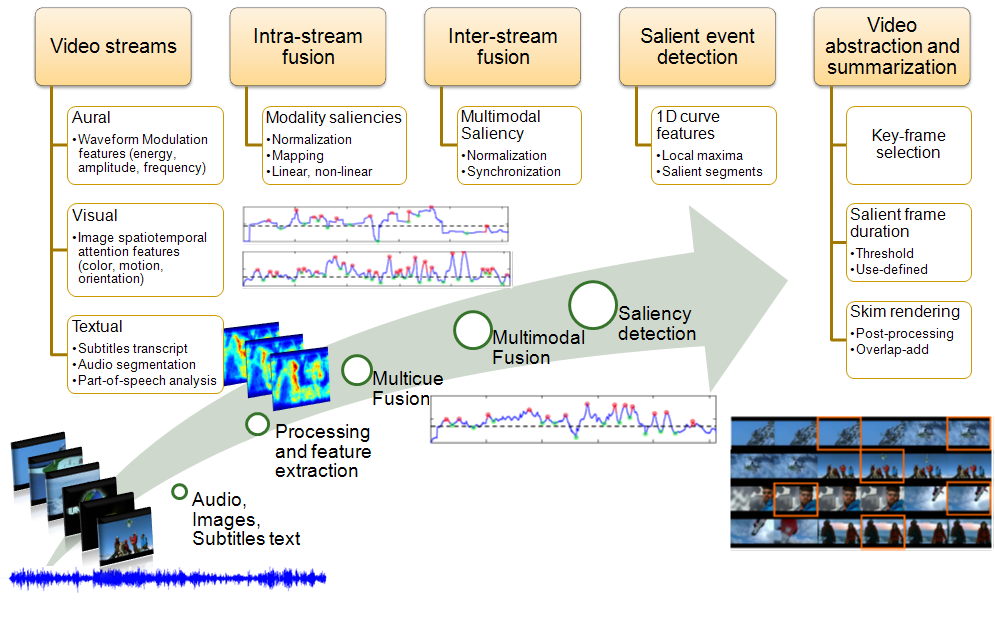

Motivated by the grand challenge of endowing computers with human-like abilities for multimodal sensory information processing, perception and cognitive attention, COGNIMUSE will undertake fundamental research in modeling multisensory and sensory-semantic integration via a synergy between system theory, computational algorithms and human cognition. It focuses on integrating three modalities (audio, vision and text) to detect salient perceptual events and combine them with semantics, building higher-level stable events through controlled attention mechanisms. Its objectives are:

- Building on improved computer vision and audio/speech processing algorithms, develop novel models and algorithms to form audiovisual perceptual micro-events by optimally fusing audiovisual saliencies and enforcing spatio-temporal coherence, guided by multisensory psychophysics.

- Extract information from the language-text modality, with emphasis on text saliency, verb-actions, affect, and cross-media semantics between language and the sensory modalities.

- Develop a novel control-theoretic computational model for heterogeneous integration of the audiovisual perceptual micro-events with language-text semantics to form stable integrated meso-events through attention mechanisms.

- Investigate two technology-frontier multimodal applications as test-bed areas and showcases for the advances in the previous general objectives: summarization and attention control in movie videos and TV documentaries or news.

COGNIMUSE will pursue scientific excellence in researching this fascinating field of computational modeling of multisensory perceptual and cognitive processes, at both the signal and the event level, through novel unimodal visual, audio and text processing advances in saliency detection, novel cross-modal and sensory-semantic integration, and a novel heterogeneous control approach to attention mechanisms.